
Getty Images

Microsoft introduced DirectStorage to Windows PCs this week. The API promises faster load times and more detailed graphics by letting game developers make apps that load graphical data from the SSD directly to the GPU. Now, Nvidia and IBM have created a similar SSD/GPU technology, but they are aiming it at the massive data sets in data centers.

Instead of targeting console or PC gaming like DirectStorage, Big accelerator Memory (BaM) is meant to give data centers quick access to vast amounts of data in GPU-intensive applications, like machine-learning training, analytics, and high-performance computing, according to a research paper spotted by The Register this week. Entitled “BaM: A Case for Enabling Fine-grain High Throughput GPU-Orchestrated Access to Storage” (PDF), the paper by researchers at Nvidia, IBM, and a few US universities proposes a more efficient way to run next-generation applications in data centers with massive computing power and memory bandwidth.

BaM also differs from DirectStorage in that the creators of the system architecture plan to make it open source.

The paper says that while CPU-orchestrated storage data access is suitable for “classic” GPU applications, such as dense neural network training with “predefined, regular, dense” data access patterns, it causes too much “CPU-GPU synchronization overhead and/or I/O traffic amplification.” That makes it less suitable for next-gen applications that use graph and data analytics, recommender systems, graph neural networks, and other “fine-grain data-dependent access patterns,” the authors write.

Like DirectStorage, BaM works alongside an NVMe SSD. According to the paper, BaM “mitigates I/O traffic amplification by enabling the GPU threads to read or write small amounts of data on-demand, as determined by the compute.”
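To make “I/O traffic amplification” concrete, here is a toy Python model (not from the paper) that counts how many bytes move when every small, scattered read drags in the whole aligned block containing it. The workload, the 512-byte read size, and the two granularities (4 KiB standing in for fine-grained on-demand reads, 2 MiB standing in for coarse bulk transfers) are all hypothetical illustration values, not BaM's actual parameters.

```python
"""Toy model of I/O traffic amplification; all numbers are hypothetical."""

KiB = 1024
MiB = 1024 * KiB


def blocks_touched(offsets, access_size, granularity):
    """Return the set of aligned blocks (of size `granularity`) that must be
    fetched to serve reads of `access_size` bytes at each offset.
    A block shared by several reads is counted only once."""
    blocks = set()
    for off in offsets:
        first = off // granularity
        last = (off + access_size - 1) // granularity  # a read may straddle blocks
        blocks.update(range(first, last + 1))
    return blocks


def amplification(offsets, access_size, granularity):
    """Ratio of bytes transferred to bytes the application actually needed."""
    moved = len(blocks_touched(offsets, access_size, granularity)) * granularity
    useful = len(offsets) * access_size
    return moved / useful


# Hypothetical sparse, data-dependent workload: 1,000 reads of 512 bytes,
# spaced 10 MiB apart (think of scattered graph-node lookups).
OFFSETS = [i * 10 * MiB for i in range(1000)]
ACCESS_SIZE = 512

if __name__ == "__main__":
    for label, gran in [("fine-grained, 4 KiB", 4 * KiB), ("coarse, 2 MiB", 2 * MiB)]:
        ratio = amplification(OFFSETS, ACCESS_SIZE, gran)
        print(f"{label}: {ratio:.0f}x more bytes moved than needed")
```

With these made-up numbers, the coarse path moves 4,096 times more data than the workload uses, versus 8 times for 4 KiB reads; that gap is the kind of waste fine-grained, GPU-initiated access aims to shrink.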

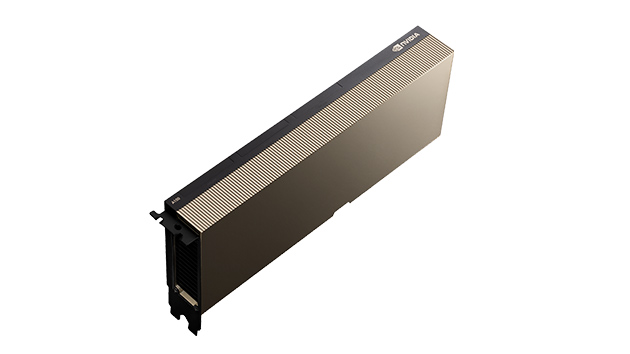

More specifically, BaM uses a GPU’s onboard memory as a software-managed cache, plus a GPU thread software library. The threads receive data from the SSD and move it with the help of a custom Linux kernel driver. Researchers tested a prototype system with an Nvidia A100 40GB PCIe GPU, two AMD EPYC 7702 CPUs with 64 cores each, and 1TB of DDR4-3200 memory. The system runs Ubuntu 20.04 LTS.

Researchers’ prototype system included an Nvidia A100 40GB PCIe GPU (pictured).
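The idea of using GPU memory as a software-managed cache in front of an SSD can be sketched as follows. This is a single-threaded, CPU-side Python model under loudly stated assumptions: the class name `SoftwareManagedCache`, the LRU eviction policy, and the block size are hypothetical choices for illustration, and the real BaM cache lives in GPU memory, is shared by thousands of concurrent GPU threads, and may use a different replacement policy and layout.

```python
from collections import OrderedDict


class SoftwareManagedCache:
    """Hypothetical model: a fixed number of block-sized slots standing in for
    GPU memory, filled on demand from a backing store standing in for the SSD."""

    def __init__(self, backing, block_size, num_slots):
        self.backing = backing        # bytes-like object playing the SSD's role
        self.block_size = block_size
        self.num_slots = num_slots
        self.slots = OrderedDict()    # block index -> cached bytes, in LRU order
        self.hits = 0
        self.misses = 0

    def _load_block(self, idx):
        """Return block `idx`, reading it from the backing store only on a miss."""
        if idx in self.slots:
            self.hits += 1
            self.slots.move_to_end(idx)        # mark as most recently used
            return self.slots[idx]
        self.misses += 1
        start = idx * self.block_size
        data = bytes(self.backing[start:start + self.block_size])  # simulated SSD read
        if len(self.slots) >= self.num_slots:
            self.slots.popitem(last=False)     # evict the least recently used block
        self.slots[idx] = data
        return data

    def read(self, offset, length):
        """Return `length` bytes at `offset`, touching only the blocks that cover them."""
        out = bytearray()
        pos, end = offset, offset + length
        while pos < end:
            idx, within = divmod(pos, self.block_size)
            block = self._load_block(idx)
            take = min(end - pos, self.block_size - within)
            out += block[within:within + take]
            pos += take
        return bytes(out)


if __name__ == "__main__":
    ssd = bytes(range(256)) * 64                  # 16 KiB of fake "SSD" contents
    cache = SoftwareManagedCache(ssd, block_size=4096, num_slots=2)
    cache.read(10, 4)                             # miss: fetches block 0
    cache.read(100, 8)                            # hit: block 0 already cached
    print(f"hits={cache.hits} misses={cache.misses}")
```

Repeated small reads that land in an already-cached block are served without another trip to storage, which is the point of keeping a cache close to the threads that issue the reads.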

The authors noted that even a “consumer-grade” SSD could support BaM with app performance that is “competitive against a much more expensive DRAM-only solution.”
